350,000. That’s how many people attended AI Expo 2023, a sea of innovators and thinkers drowning in possibility. And then, this week, a very real, very scary headline ripped through that digital hum: an attack on Sam Altman’s home.

It’s a visceral reminder that the dazzling abstractions of artificial intelligence – the algorithms, the neural networks, the promise of a transformed future – are ultimately embodied in flesh-and-blood humans. And when that human is the very public face of a company pushing the boundaries of what’s possible, the stakes, it seems, just got terrifyingly higher.

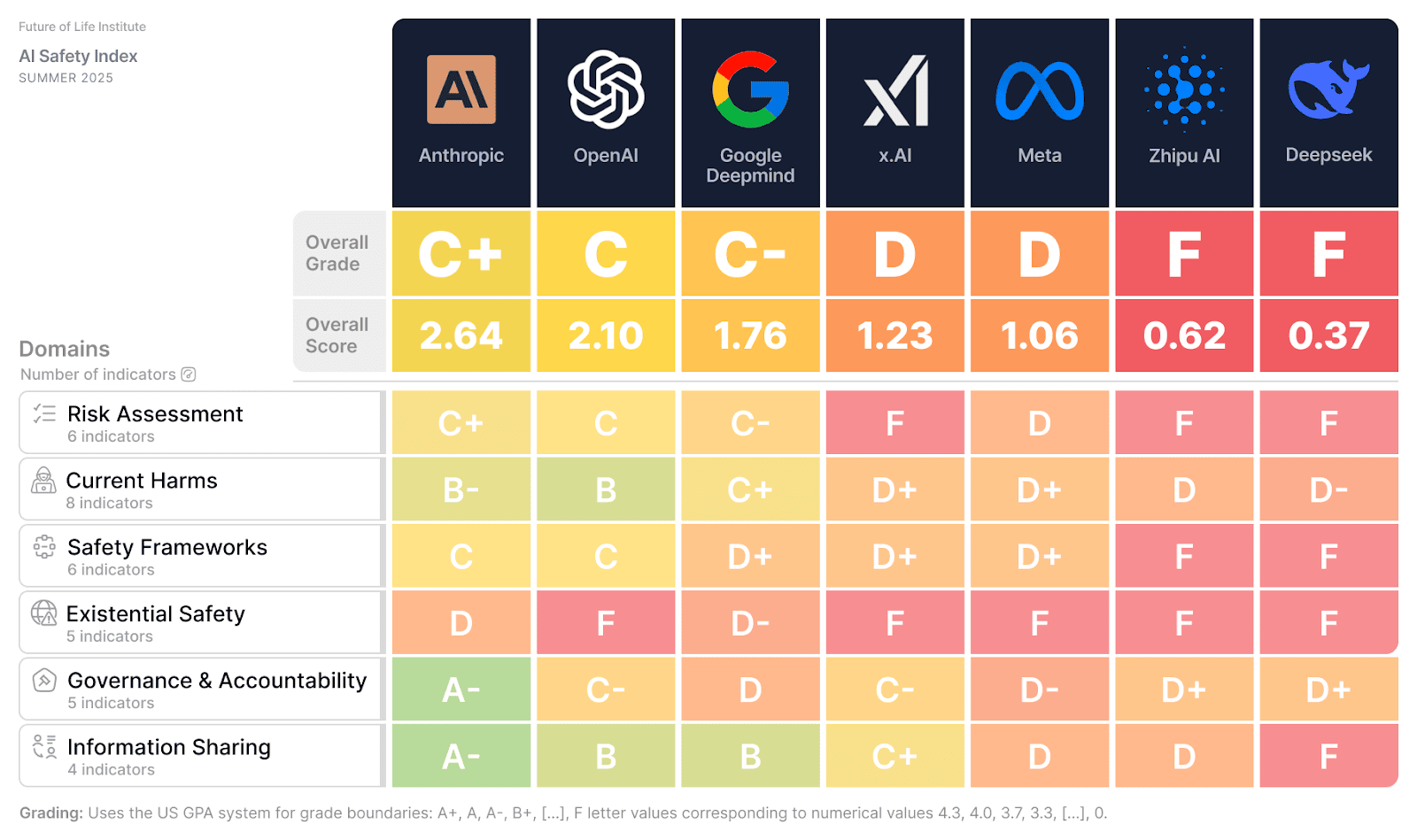

The Future of Life Institute (FLI), a name that resonates with anyone seriously pondering the grand arc of technological evolution, has stepped into the breach. Their President and CEO, Anthony Aguirre, didn’t mince words. This wasn’t just a news item; it was an existential jolt.

“We are deeply relieved that no one was hurt in today’s attack on Sam Altman’s home. Our thoughts are with him, his family, and everyone affected by this terrifying ordeal.”

This statement, stark and immediate, cuts through the usual corporate-speak. It’s the human element, stripped bare. No platitudes, just raw relief and empathy.

The Unacceptable Escalation

But the FLI didn’t stop at sentiment. They doubled down, making it clear that this wasn’t just a personal ordeal but a societal problem. The language is sharp, deliberate: “Despicable and utterly indefensible.” These aren’t the words you typically hear from an organization that, by its very nature, exists to navigate complex, often abstract, future risks. This is about the here and now.

The call for accountability is direct. “Urge law enforcement to hold them accountable to the fullest extent of the law.” There’s a sense that this is a line in the sand. The implications for the future of AI development, for the very people driving it, feel immense. Imagine the chilling effect on innovators who might now be looking over their shoulders, not just at market competition or regulatory hurdles, but at potential physical threats.

Violence vs. Vision

And then comes the kicker, the sentence that truly crystallizes the message: “Violence and intimidation of any kind have no place in the conversation about the future of AI.” This is the core of it, isn’t it? We’re talking about code, about data, about progress that could reshape our world in ways we’re still struggling to comprehend. And somehow, it’s been dragged through the mud of brutal, primitive aggression.

My own take? This event, as horrific as it is, might actually do more to clarify the ethical imperatives surrounding AI than a thousand policy papers. It forces us to confront the human cost, the personal impact. The FLI, as the “world’s oldest and largest AI think tank,” is uniquely positioned to bridge that gap between abstract risk and lived reality. They’ve been working to steer the development of transformative technologies since 2014, and this incident, I suspect, will only sharpen their focus on the very human stakes involved.

This isn’t just about protecting Sam Altman; it’s about protecting the very spirit of innovation, ensuring that the people building our future can do so without fearing for their safety. The future of AI, it turns out, depends on the safety and security of its architects. A sobering thought indeed, and one that demands a unified, unwavering response.

Frequently Asked Questions

What is the Future of Life Institute (FLI)?

The Future of Life Institute is a non-profit organization focused on guiding transformative technologies, particularly artificial intelligence, toward benefiting humanity and mitigating extreme risks.

Why is the FLI commenting on an attack on Sam Altman’s home?

The FLI is commenting because the attack highlights the personal safety concerns for individuals at the forefront of AI development and underscores the need for constructive dialogue, free from violence and intimidation, regarding the future of AI.